[ Update 02/19 - v0.0.3 - zkAccess + ComposeDB + Deployments]

Here’s the third update for the zkAccess grant (a bit late: it was meant for 02/17, but we had to verify a few things).

Overview

- Completed the schema for zkAccess after multiple tries, while learning what can and cannot be done in ComposeDB

- Tried multiple times to deploy a Ceramic node, without success. Ended up deciding to focus on the rest of the application instead

- Gearing towards an ETH Denver release, although I’m still missing a Ceramic node and a few features for the app to fully work.

Description

We are nearing the end of the application and hence, on my side, of the grant. I’ve managed to complete the schema for our application, which ended up being a bit simpler after a few iterations. In short, for this particular demo, here’s the schema:

    type Account @createModel(accountRelation: SINGLE, description: "The owner of a public key") {
      owner: DID! @documentAccount
      rawId: String! @string(maxLength: 64)
      publicKey: String! @string(maxLength: 200)
    }

    type Keyring @createModel(accountRelation: LIST, description: "A list of public keys") {
      owner: DID! @documentAccount
      title: String! @string(minLength: 10, maxLength: 100)
      keys: [String!] @list(maxLength: 1000) @string(maxLength: 200)
    }

There were a few hiccups that slowed us down here. The first one was not knowing that ComposeDB currently cannot filter on field values (see “How to query stream data? I mean is it possible to filter streams based on the data inside the stream?” and “Queries by fields - #8 by Sami”). This meant that I had to add a DID field with the @documentAccount directive to all my models, as I would otherwise be unable to fetch the current user’s keyring and credential. It’s also not so obvious how to do this until you find the relevant part of the documentation: “Queries | ComposeDB on Ceramic”.
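As a rough sketch of that workaround: instead of filtering, the query goes through `viewer`, which resolves to the currently authenticated DID. The field names `account` and `keyringList` below are assumptions based on how ComposeDB usually derives names from SINGLE and LIST models, and `QueryExecutor` is a hypothetical minimal interface standing in for a ComposeDB client (anything exposing `executeQuery`). This is not necessarily the exact query used in zkAccess.

```typescript
// Hypothetical minimal interface: anything with an executeQuery method,
// e.g. a ComposeDB client instance.
interface QueryExecutor {
  executeQuery(query: string): Promise<{ data?: unknown; errors?: unknown }>;
}

interface KeyringNode {
  id: string;
  title: string;
  keys: string[] | null;
}

interface ViewerKeyringsData {
  // Assumption: viewer is null when no DID is authenticated on the client.
  viewer: { keyringList: { edges: Array<{ node: KeyringNode | null }> } } | null;
}

// No filter argument: the documents are scoped by the viewer's DID instead.
// Field names (account, keyringList) are assumed, not taken from the post.
const VIEWER_KEYRINGS_QUERY = `
  query ViewerKeyrings {
    viewer {
      account { rawId publicKey }
      keyringList(first: 10) {
        edges { node { id title keys } }
      }
    }
  }
`;

// Flatten the connection-style response into a plain list of keyrings.
function extractKeyrings(data: ViewerKeyringsData | null | undefined): KeyringNode[] {
  const edges = data?.viewer?.keyringList.edges ?? [];
  const keyrings: KeyringNode[] = [];
  for (const edge of edges) {
    if (edge.node) keyrings.push(edge.node);
  }
  return keyrings;
}

// Run the viewer query and return the current user's keyrings.
async function fetchViewerKeyrings(client: QueryExecutor): Promise<KeyringNode[]> {
  const result = await client.executeQuery(VIEWER_KEYRINGS_QUERY);
  if (result.errors) {
    throw new Error("Viewer query failed: " + JSON.stringify(result.errors));
  }
  return extractKeyrings(result.data as ViewerKeyringsData);
}
```

The trade-off is the one described above: every model needs the @documentAccount field so that its documents are reachable from `viewer`.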

The second drawback was not being able to “append” to arrays: there is only a replace-style mutation (e.g. update$Model). This meant that if your model has any dynamic, array-like property, you need to retrieve all existing values for that property first, and then send the whole array back through the update mutation. Not the worst, but also not the best.

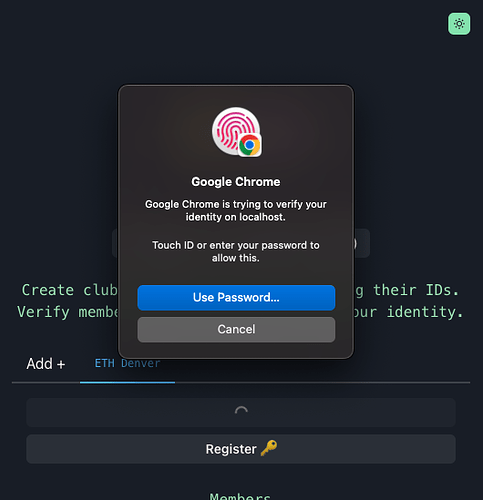
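A minimal sketch of that read-then-replace pattern for the `keys` array, assuming the generated mutation is called `updateKeyring` and takes an `UpdateKeyringInput` with `id` and `content` (this follows the usual ComposeDB naming, but is not copied from the zkAccess code). The helper names `appendKey` and `buildUpdateVariables` are hypothetical.

```typescript
// Assumed shape of the generated update mutation for the Keyring model.
const UPDATE_KEYRING_MUTATION = `
  mutation UpdateKeyring($i: UpdateKeyringInput!) {
    updateKeyring(input: $i) {
      document { id keys }
    }
  }
`;

interface KeyringUpdateVariables {
  i: { id: string; content: { keys: string[] } };
}

// Return a new array with newKey appended, respecting the schema limits
// (@list(maxLength: 1000) and @string(maxLength: 200)). Duplicates are skipped.
function appendKey(
  existing: readonly string[],
  newKey: string,
  maxKeys: number = 1000,
  maxKeyLength: number = 200
): string[] {
  if (newKey.length > maxKeyLength) {
    throw new Error("Key exceeds " + maxKeyLength + " characters");
  }
  if (existing.indexOf(newKey) !== -1) {
    return existing.slice();
  }
  if (existing.length >= maxKeys) {
    throw new Error("Keyring already holds " + maxKeys + " keys");
  }
  return existing.concat([newKey]);
}

// Build the variables for the replace-style mutation: the full array is sent,
// not just the new element.
function buildUpdateVariables(
  keyringId: string,
  existingKeys: readonly string[],
  newKey: string
): KeyringUpdateVariables {
  return { i: { id: keyringId, content: { keys: appendKey(existingKeys, newKey) } } };
}
```

In practice `existingKeys` would come from a fresh query right before the update; two clients updating the same keyring at the same time could still overwrite each other, which this sketch does not address.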

The last drawback was not related to ComposeDB, but to webauthn. In short, we wanted to reuse the public key created during navigator.credentials.create later on via navigator.credentials.get, but it seems the current relying party model expects the server to “store” these public keys. In our case the “server” is ComposeDB, which we had unlinked in the last update to preserve anonymity. Now we need to store the publicKey and link it to the current DID, which exposes the user’s address. Not the worst, but now it’s obvious which club contains which people. Verification is still done via zero-knowledge proofs, but that linkage is now evident to anyone viewing the network.
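To illustrate what “storing the public key” could look like against the Account model, here is a sketch that encodes the credential’s raw ID and public key as strings and checks them against the schema limits (64 and 200 characters). The base64url encoding and the helper names `toBase64Url` and `toAccountContent` are assumptions made for this example; the post does not say which encoding zkAccess actually uses.

```typescript
const B64URL_ALPHABET =
  "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789-_";

// Encode bytes as unpadded base64url (hand-rolled so it runs in any JS runtime).
function toBase64Url(bytes: Uint8Array): string {
  let out = "";
  for (let i = 0; i < bytes.length; i += 3) {
    const b0 = bytes[i];
    const b1 = i + 1 < bytes.length ? bytes[i + 1] : 0;
    const b2 = i + 2 < bytes.length ? bytes[i + 2] : 0;
    out += B64URL_ALPHABET[b0 >> 2];
    out += B64URL_ALPHABET[((b0 & 0x03) << 4) | (b1 >> 4)];
    if (i + 1 < bytes.length) out += B64URL_ALPHABET[((b1 & 0x0f) << 2) | (b2 >> 6)];
    if (i + 2 < bytes.length) out += B64URL_ALPHABET[b2 & 0x3f];
  }
  return out;
}

interface AccountContent {
  rawId: string;
  publicKey: string;
}

// Turn raw credential bytes into the string fields of the Account model,
// rejecting values that would violate the schema's maxLength constraints.
function toAccountContent(rawId: Uint8Array, publicKey: Uint8Array): AccountContent {
  const content = { rawId: toBase64Url(rawId), publicKey: toBase64Url(publicKey) };
  if (content.rawId.length > 64) {
    throw new Error("Encoded rawId exceeds 64 characters");
  }
  if (content.publicKey.length > 200) {
    throw new Error("Encoded publicKey exceeds 200 characters");
  }
  return content;
}

// Browser-only usage (not executed here; `options` is a placeholder):
// const cred = (await navigator.credentials.create({ publicKey: options })) as PublicKeyCredential;
// const response = cred.response as AuthenticatorAttestationResponse;
// const spki = response.getPublicKey(); // may be null for unsupported algorithms
// if (spki) toAccountContent(new Uint8Array(cred.rawId), new Uint8Array(spki));
```

Note that with this encoding a credential ID longer than 48 bytes would not fit in the 64-character `rawId` field, so the limit or the encoding may need adjusting depending on the authenticator.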

User Interface

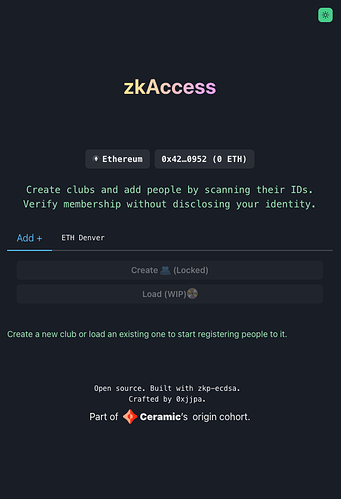

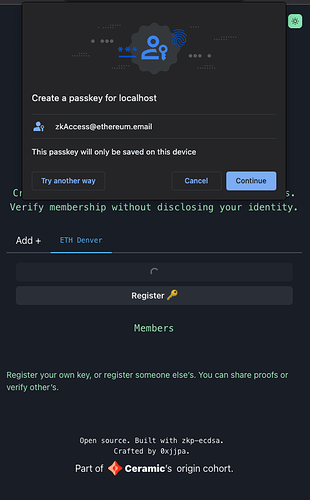

We completed our final UI, which had a bit of a refactor to accommodate ETH Denver.

For now, we are only allowing people to create a single club (“ETH Denver”), to make it easier to aggregate multiple models later on. That can be done after ETH Denver; the important part now is to be able to gather keys. Our final goal is to achieve an experience similar to the one ENS gave during DevCon Bogota (see https://twitter.com/ensdomains/status/1574428620818288640) with their Leaderboard (“Swag - ENS Domains”).

We also spent some time making sure it works on desktop. We expect most usage to be on mobile, but we want it to work on people’s desktops as well.

We also resolved an edge case where Chrome was only accepting YubiKeys, and finally managed to also read the public key that the browser or device provides.

Next Steps

We have now verified all 3 steps from the original post (i.e., we can write webauthn data to Ceramic from an Ethereum wallet, we can query it in a string-like format through ComposeDB queries, and we can restore all this data to create zero-knowledge proofs). Since we were pushing a few bleeding-edge features, we ran into more walls than expected.

The next steps are simply to finish the application and get it ready for ETH Denver. We expect to need some 4-5 more hours to have it “ready to use”. Deployments for both the website and the Ceramic node are pending.

Blockers

- Production Deployment (still) - I have to say that I spent more time than I’m comfortable with trying to get a Ceramic node up and running in a VPS environment. I created 0xjjpa/zkaccess-infra on GitHub (“Setting up a Ceramic prod node via Terraform / Ansible using GitHub Actions”), which relied on the ceramic-poc-infra template, and was unable to get a stable node. Furthermore, the Terraform script did not include a proper storage backup, so if it failed once, you would have to delete the components in your datacenter manually. You can see other efforts I made in Discord. Also, I guess there are tons of mainnet changes and network changes at the moment, so sometimes my node goes kaput without much chance of recovery. It would be a bit of a pity to have the app ready and not be able to use it because the Ceramic node or network is at fault. Oh well.

Since the last update will anyway be next week, before ETH Denver, any further updates will be made directly in this post.